Manual vs automated website screenshots: why folders don't scale

It happens all the time: you need to track changes on a competitor's website, keep an eye on your own site after each deploy, or monitor a supplier's pricing page that could update without warning. The first thing that comes to mind is just taking a screenshot — open the site, hit Print Screen, save the file. The next day, do it again and compare the two images side by side. For a one-off check, that works fine. But try doing this for a week straight, and the real problems start showing up fast.

We've been through this ourselves before building Snapshot Archive. The pattern is always the same: you start motivated, keep it up for a few days, and then life gets in the way. In this article we'll walk through both approaches — manual and automated — and show you the exact point where manual monitoring falls apart and why.

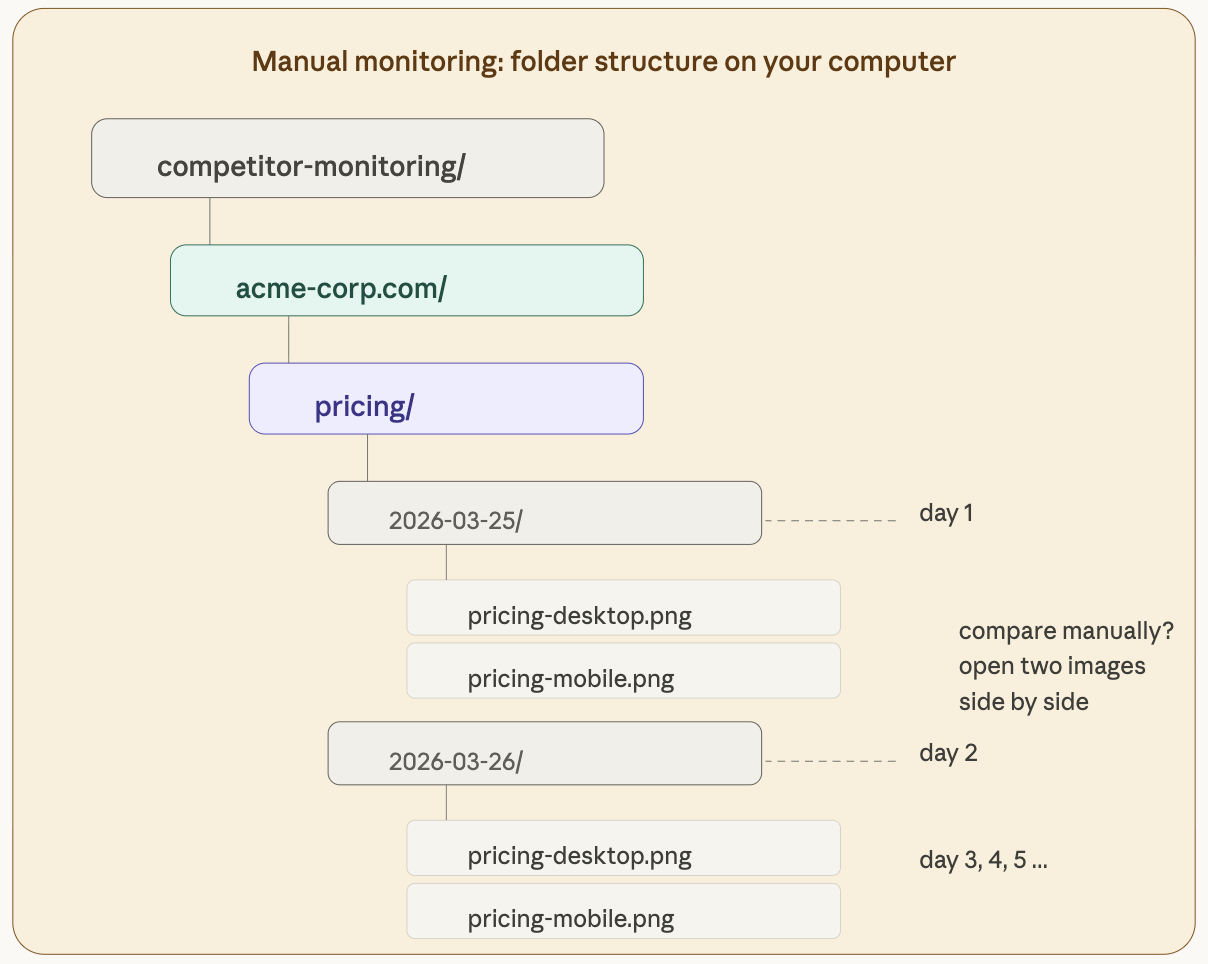

The manual way: folders, dates, and two monitors side by side

Manual monitoring usually looks something like this. You create a folder on your computer and name it after your competitor. Inside, you make subfolders by date (2026-03-25, 2026-03-26, and so on), and in each subfolder you save screenshots such as pricing-desktop.png and homepage.png.

For the first few days, this works well enough. You open two files side by side, squint at them, try to spot what's different. But nobody keeps this up for long, and the reasons are always the same.

You forget to take the screenshot. Monday you're buried in another project. Tuesday it slips your mind entirely. Wednesday you remember, but it's already late afternoon and you're not sure if the page changed in the morning. Before you know it, your archive has gaps — and you have no idea what happened on the days you missed. A competitor could have tested a new pricing structure for 48 hours and reverted it, and you'd never know it existed.

Eyeballing is unreliable even when you do remember. The big changes jump out when you put two screenshots next to each other: a new section, a different color, a missing block. But the small stuff flies under the radar completely. Did the price change from $29 to $31? Did the button text get updated from "Start free trial" to "Get started"? Did they quietly remove one row from the pricing comparison table? On a long page with dozens of elements, these things are easy to miss — and they're often the changes that matter most.

Folders turn into chaos surprisingly quickly. After a month you have 30 subfolders. After three months, 90. Finding a specific screenshot from a specific date becomes a task in itself. And if you're monitoring multiple websites, the folder structure grows to the point where navigating to the right file takes longer than the comparison itself. We've seen people maintain spreadsheets just to track which folders contain which screenshots — at which point you're building a homemade monitoring system out of Excel, and not a very good one.
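To put numbers on that growth, here's a small Python sketch that lists every file the manual scheme produces. The `manual_archive_paths` helper and the site and page names are hypothetical. It simply follows the competitor/date/page layout described earlier, with one screenshot per page per day.

```python
from datetime import date, timedelta
from pathlib import PurePosixPath


def manual_archive_paths(sites: list[str], pages: list[str], start: date, days: int) -> list[PurePosixPath]:
    """Every file in a <site>/<YYYY-MM-DD>/<page>.png archive kept for `days` days."""
    paths = []
    for site in sites:
        for offset in range(days):
            day = (start + timedelta(days=offset)).isoformat()  # e.g. "2026-03-25"
            for page in pages:
                paths.append(PurePosixPath(site) / day / f"{page}.png")
    return paths


# One competitor, two pages, three months of daily screenshots
paths = manual_archive_paths(["acme"], ["pricing-desktop", "homepage"], date(2026, 3, 25), 90)
print(len(paths))  # 180
print(paths[0])    # acme/2026-03-25/pricing-desktop.png
```

With one competitor and two pages, that's already 180 files in 90 date folders. Ten competitors with the same two pages would mean 1,800 files to name, store, and find again by hand.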

And then there's the notification problem, or rather the complete absence of one. You only find out about a change when you decide to check. If your competitor updated their pricing on Friday evening, you won't see it until Monday morning — and even then, only if you remember to open the right folder and compare the right pair of files.

The manual approach works in exactly one scenario: when you need a one-off screenshot for a report or a presentation. For ongoing monitoring — the kind where missing a change has real consequences — it doesn't hold up.

The automated way: add a URL and forget about it

Automated monitoring works on a completely different principle. You set everything up once, and then the system runs on its own — no weekends off, no breaks, no forgotten screenshots. Let's walk through the entire process from sign-up to the first result, using Snapshot Archive as an example.

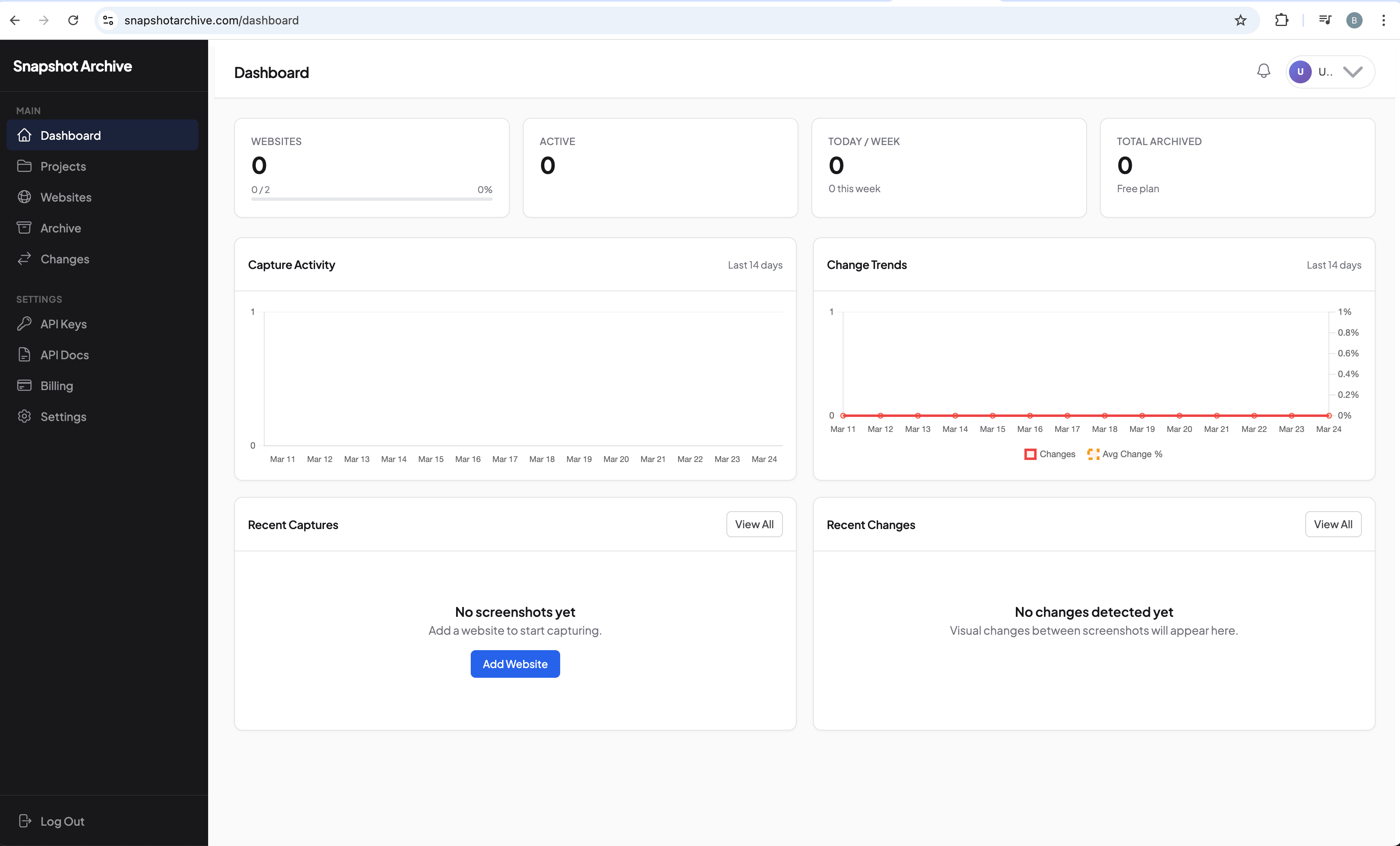

Step 1: the dashboard after sign-up

After signing up, you land on a clean dashboard with all your stats at zero: websites monitored, screenshots taken today and this week, total archive size. This is where everything will live once you start adding URLs.

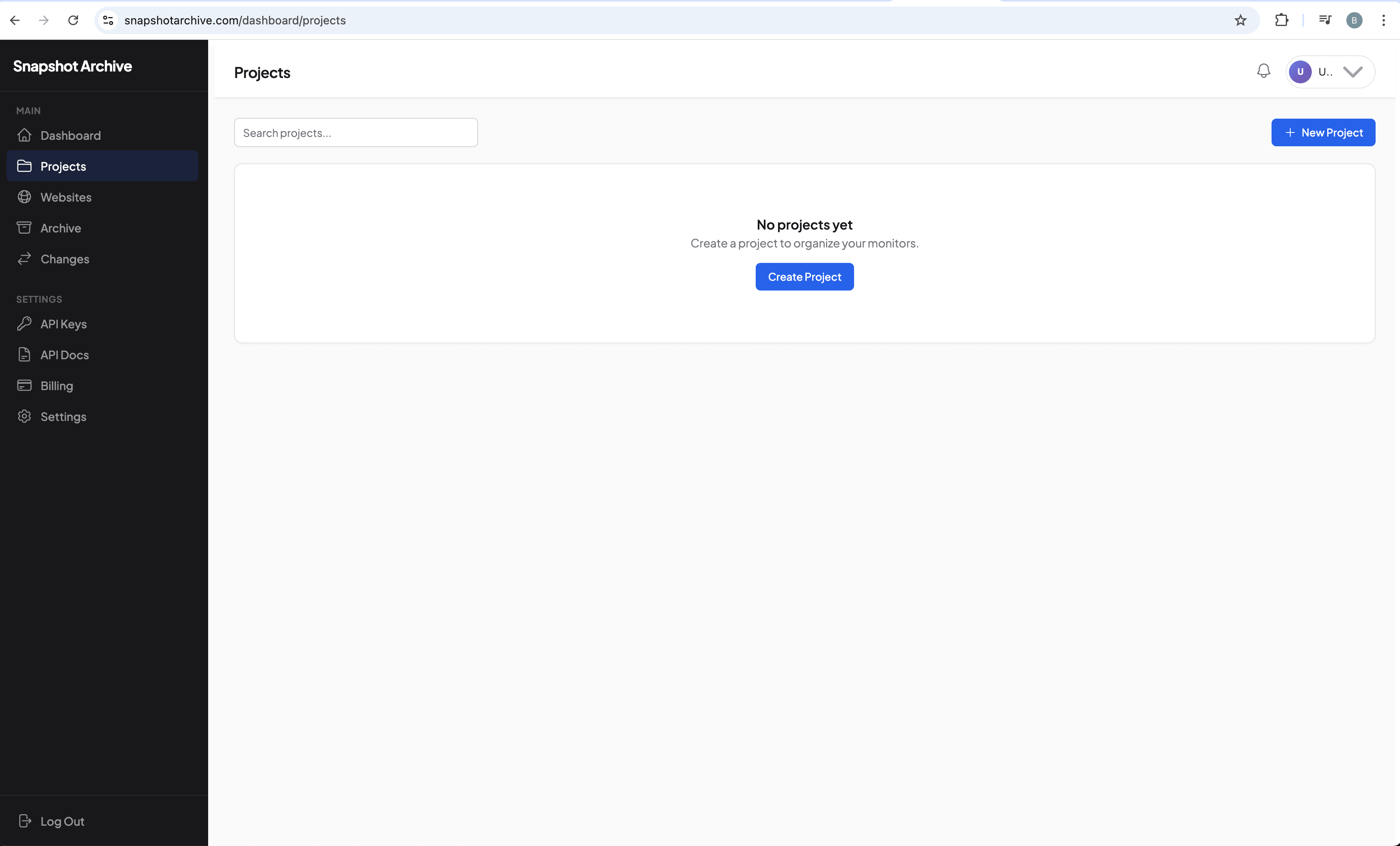

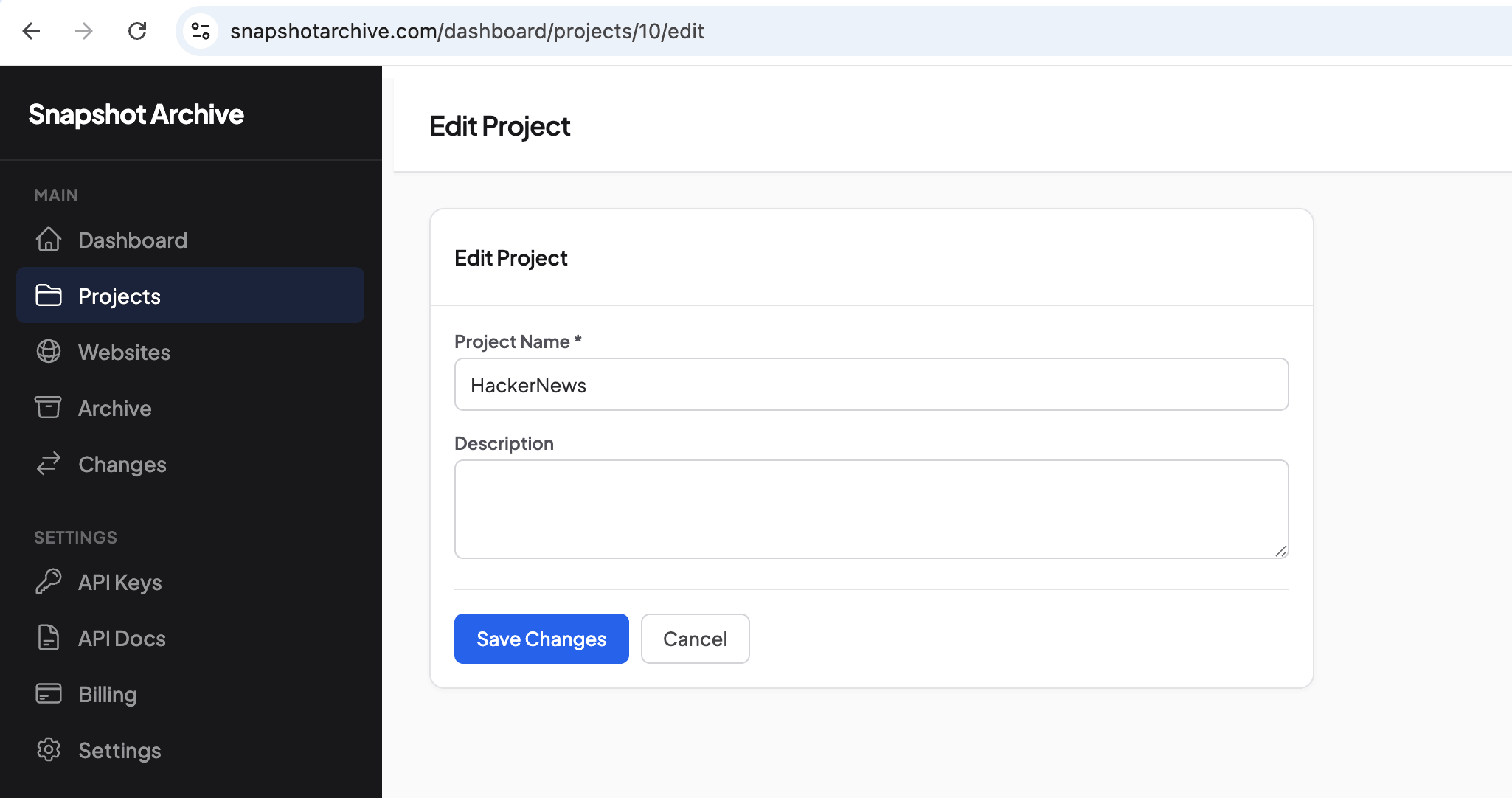

Step 2: create a project to group your URLs

Before adding websites, it's worth creating a project — a way to group related URLs together. If you're monitoring competitors, you might name the project after the competitor or after the task. For this walkthrough, we'll create one called "HackerNews" since we're going to track Hacker News as our example.

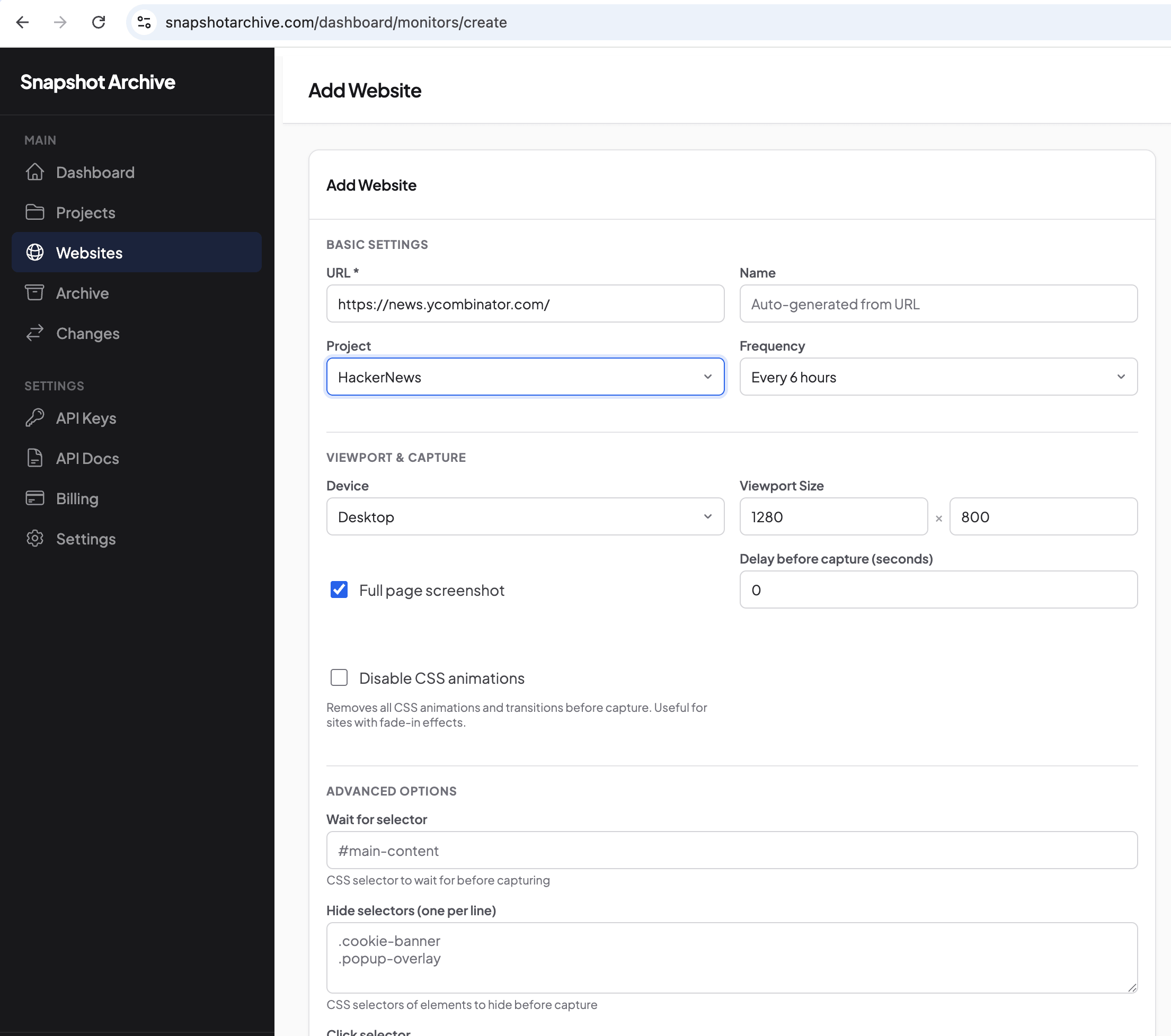

Step 3: add the website you want to monitor

Head over to the Websites section and click Add Website. In the form, enter the URL (https://news.ycombinator.com/), select the "HackerNews" project, and set the capture frequency to every 6 hours. Under Viewport & Capture, pick Desktop at 1280×800 and enable Full page screenshot so you capture everything below the fold too — we covered why full page matters for certain use cases in our comparison of full page vs viewport modes.

Take a look at the advanced settings at the bottom of the form. "Wait for selector" holds off on capturing until a specific element loads, which is useful for single-page apps (SPAs) and pages with lazy loading. "Hide selectors" removes unwanted elements before capture: cookie banners, popups, support chat widgets. With manual screenshots, you'd have to close all of that by hand every single time. Here you configure it once, and the system applies it to every future capture.
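We don't know exactly how Snapshot Archive hides elements internally, but one common technique is to inject a stylesheet into the page right before the screenshot. Here's a minimal sketch of building that stylesheet from a list of selectors; `build_hide_css` and the example selectors are hypothetical.

```python
def build_hide_css(selectors: list[str]) -> str:
    """Build a stylesheet that hides each selector (cookie banners, chat widgets, popups)."""
    # visibility: hidden hides the element but keeps its box in the layout;
    # use display: none instead if you want the space to collapse as well.
    rules = [
        f"{sel.strip()} {{ visibility: hidden !important; }}"
        for sel in selectors
        if sel.strip()  # skip blank entries
    ]
    return "\n".join(rules)


css = build_hide_css(["#cookie-banner", ".chat-widget"])
print(css)
```

If you were doing this yourself with a headless browser such as Playwright for Python, you could call `page.wait_for_selector(...)` for the "wait for selector" step, pass this string to `page.add_style_tag(content=css)`, and then take the shot with `page.screenshot(full_page=True)`.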

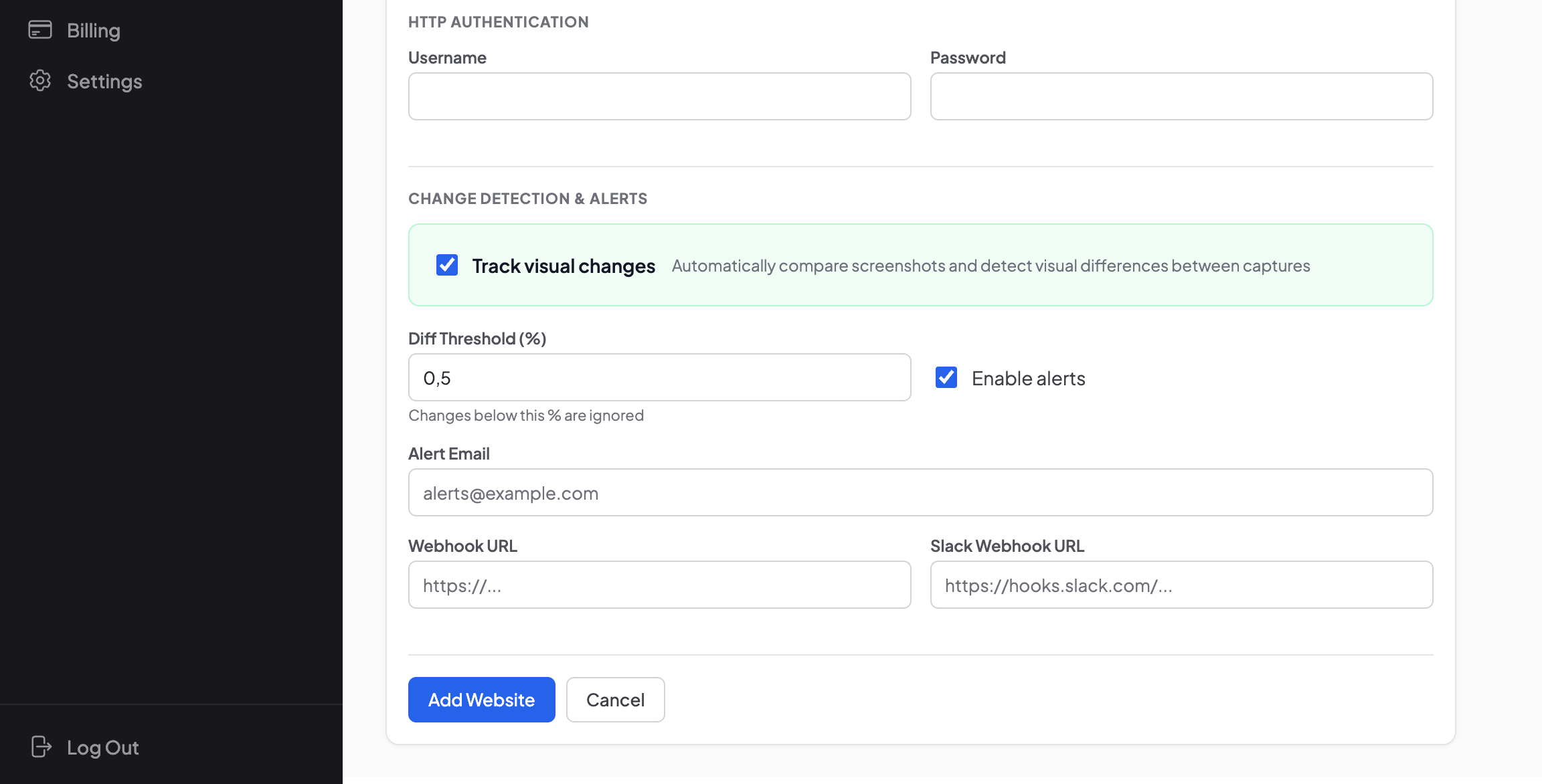

Step 4: configure change tracking and alerts

At the bottom of the same form, you'll find the Change Detection & Alerts section. Check "Track visual changes" and set the threshold — we're using 0.5% here, which means the system sends a notification if more than 0.5% of pixels differ between two consecutive captures. For pages with dynamic content (ad banners, rotating testimonials), a higher threshold like 2-3% makes more sense to avoid noise — we wrote about how to tune thresholds and reduce false positives in a separate article.
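To make the threshold concrete, here's a simplified Python sketch of the rule as described: count the pixels that differ between two consecutive captures and alert when that share exceeds the threshold. It's an illustration, not Snapshot Archive's implementation. It assumes both captures have identical dimensions, represents images as nested lists of RGB tuples, and treats any difference in any channel as a change.

```python
Pixel = tuple[int, int, int]  # (R, G, B)
Image = list[list[Pixel]]     # rows of pixels


def changed_fraction(before: Image, after: Image) -> float:
    """Share of pixels (0.0 to 1.0) that differ between two same-size captures."""
    if len(before) != len(after) or any(len(b) != len(a) for b, a in zip(before, after)):
        raise ValueError("captures must have the same dimensions")
    total = sum(len(row) for row in before)
    changed = sum(
        1
        for row_b, row_a in zip(before, after)
        for px_b, px_a in zip(row_b, row_a)
        if px_b != px_a  # exact match; no per-channel tolerance
    )
    return changed / total if total else 0.0


def should_alert(before: Image, after: Image, threshold_percent: float = 0.5) -> bool:
    """True when more than `threshold_percent` percent of pixels changed."""
    return changed_fraction(before, after) * 100 > threshold_percent


# A 100x100 white page where a 60-pixel strip turned black: 0.6% changed
before = [[(255, 255, 255)] * 100 for _ in range(100)]
after = [row[:] for row in before]
for x in range(60):
    after[0][x] = (0, 0, 0)
print(should_alert(before, after))                         # True (0.6% > 0.5%)
print(should_alert(before, after, threshold_percent=2.0))  # False
```

The same 60-pixel change triggers an alert at 0.5% but stays silent at 2%, which is exactly the trade-off between catching small edits and ignoring rotating banners.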

Notification channels are configured right here: email, webhook for integration with external services, and Slack for team collaboration. You can use all three at the same time.
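If you pick the webhook channel, you need something on your side that accepts the POST request. Below is a minimal receiver using only Python's standard library. The payload shape (`website`, `change_percent`, `comparison_url`) is an assumption made for illustration; check the actual fields your alerts contain before relying on them.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def parse_alert(body: bytes) -> dict:
    """Pull out the fields we care about. The field names are hypothetical."""
    data = json.loads(body)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    return {
        "website": data.get("website", "unknown"),
        "change_percent": float(data.get("change_percent", 0.0)),
        "comparison_url": data.get("comparison_url"),
    }


class AlertHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        try:
            alert = parse_alert(self.rfile.read(length))
        except (ValueError, TypeError):  # json.JSONDecodeError is a ValueError
            self.send_response(400)
            self.end_headers()
            return
        # Forward to a ticket system, chat bot, or log here; we just print.
        print(f"{alert['website']} changed by {alert['change_percent']:.2f}% -> {alert['comparison_url']}")
        self.send_response(204)
        self.end_headers()


def run(port: int = 8000) -> None:
    """Listen for alert POSTs until interrupted."""
    HTTPServer(("", port), AlertHandler).serve_forever()
```

Call `run()` to start listening. Keeping the parsing in its own function makes it easy to test without opening a socket, and malformed payloads get a 400 instead of crashing the handler.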

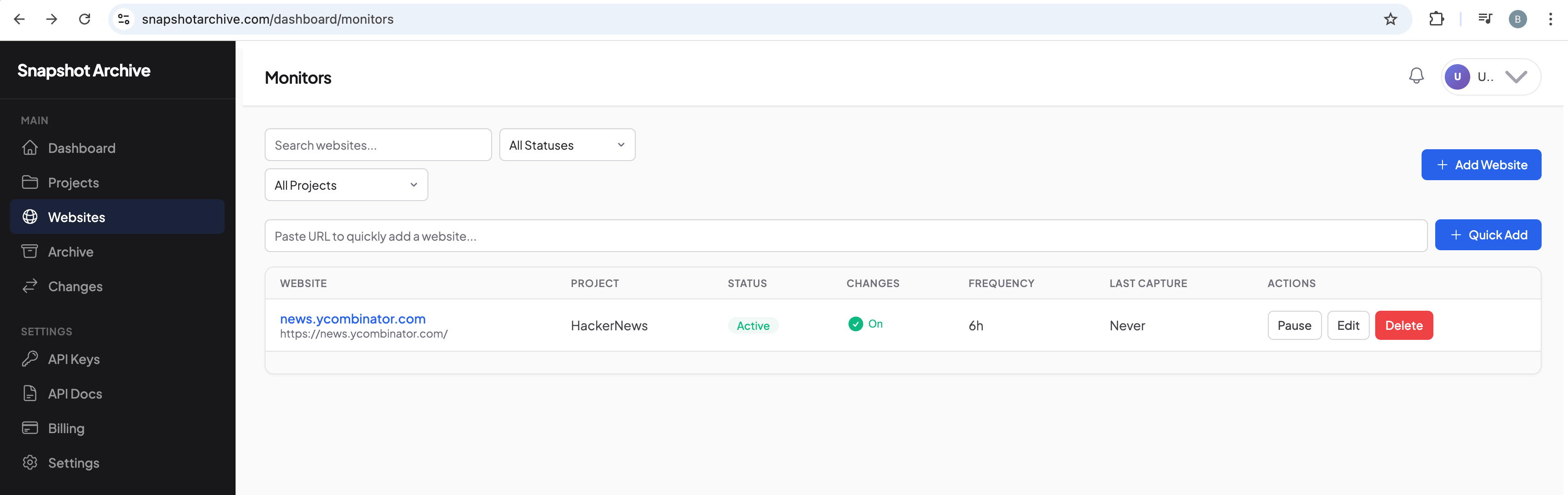

Step 5: the website is added and running

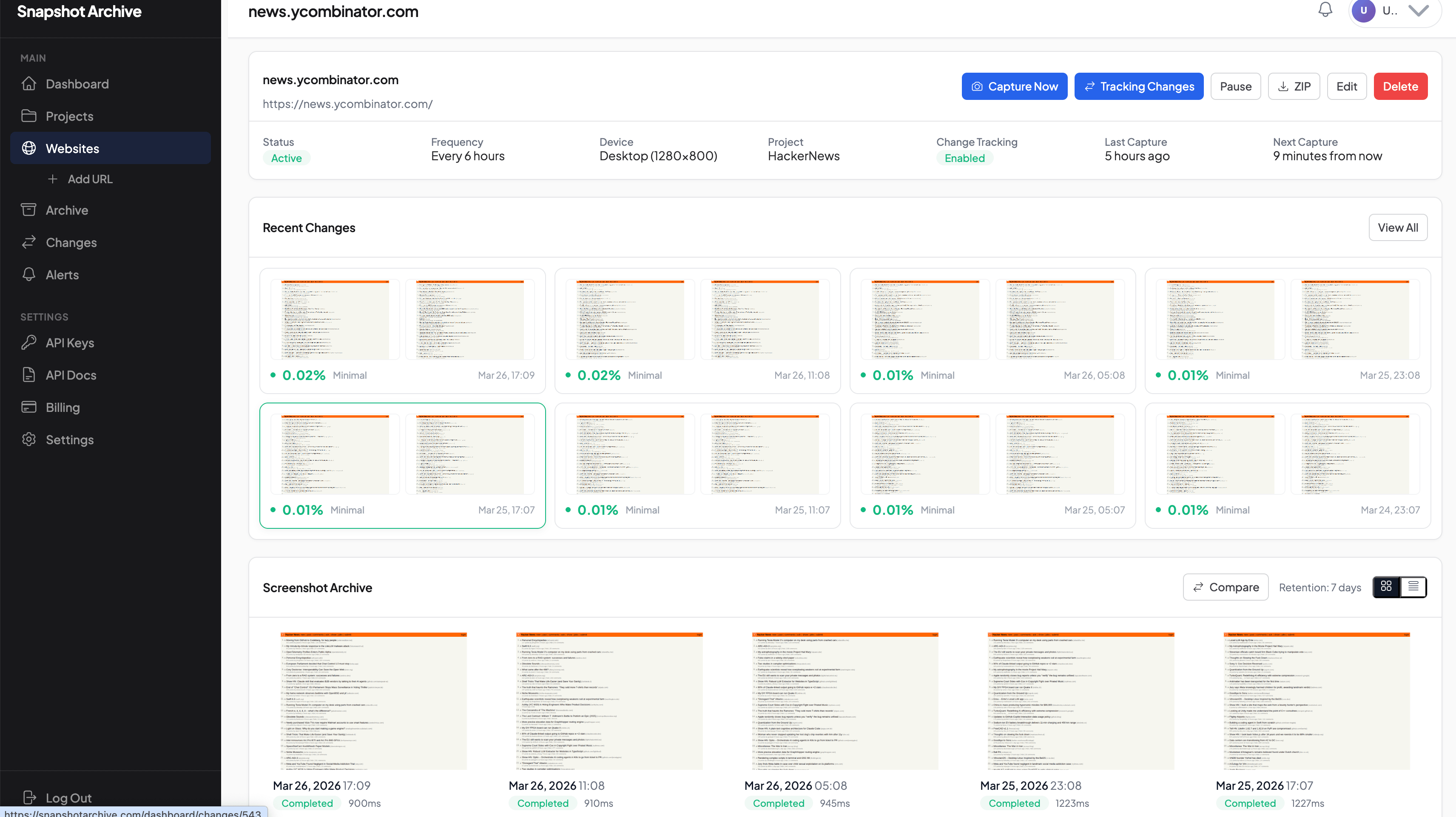

After saving, the website shows up in your monitor list. The table gives you everything at a glance: status (Active), whether change tracking is on (Changes: On), frequency (6h), and when the last screenshot was taken.

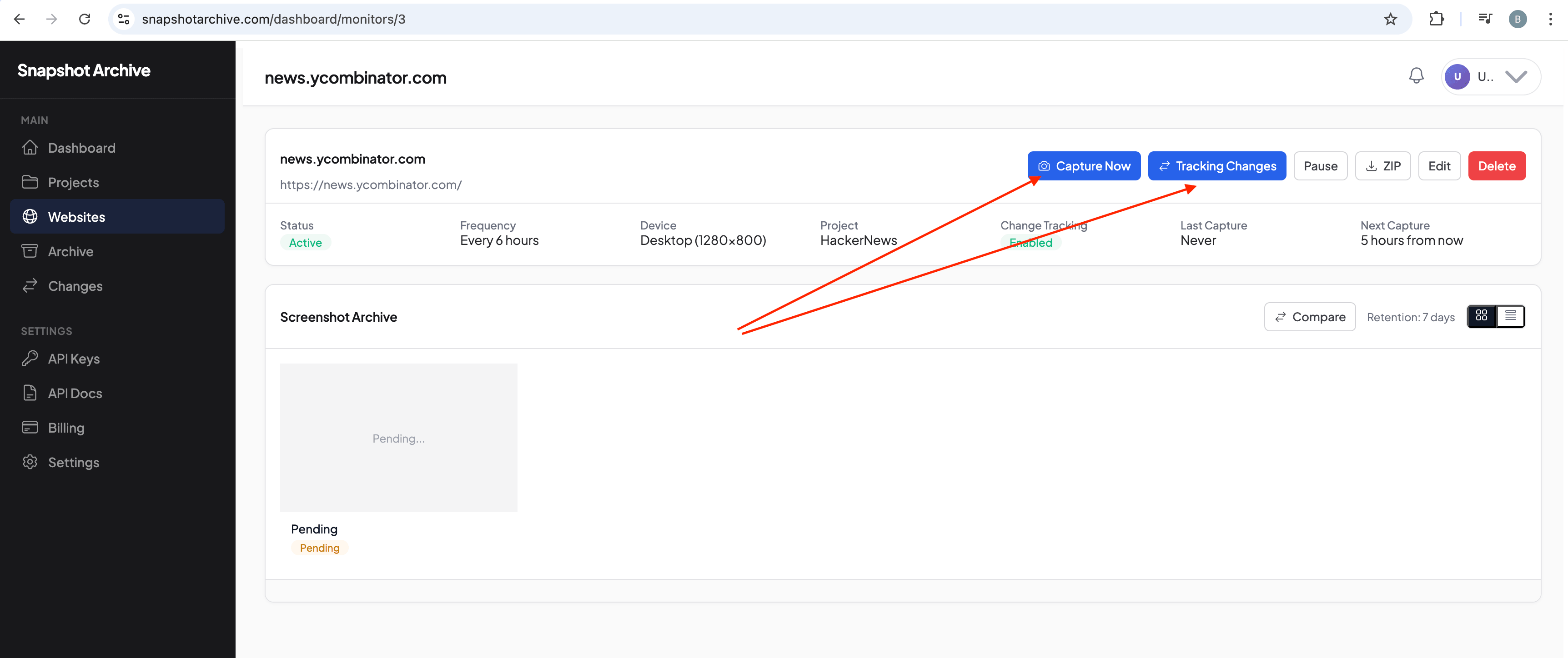

Clicking into a specific website shows the full details and controls. The "Capture Now" button takes a screenshot immediately without waiting for the schedule — useful when you've just changed settings and want to verify the result.

Step 6: the screenshot archive fills up on its own

After some time (or right after hitting Capture Now), screenshots start accumulating in the archive. The Recent Changes section shows every detected change with its percentage and timestamp, and below that sits the full screenshot archive sorted by date. Each capture records the page load time, HTTP status code, and completion status — so you're not just archiving what the page looks like, but also how it performed at that moment.

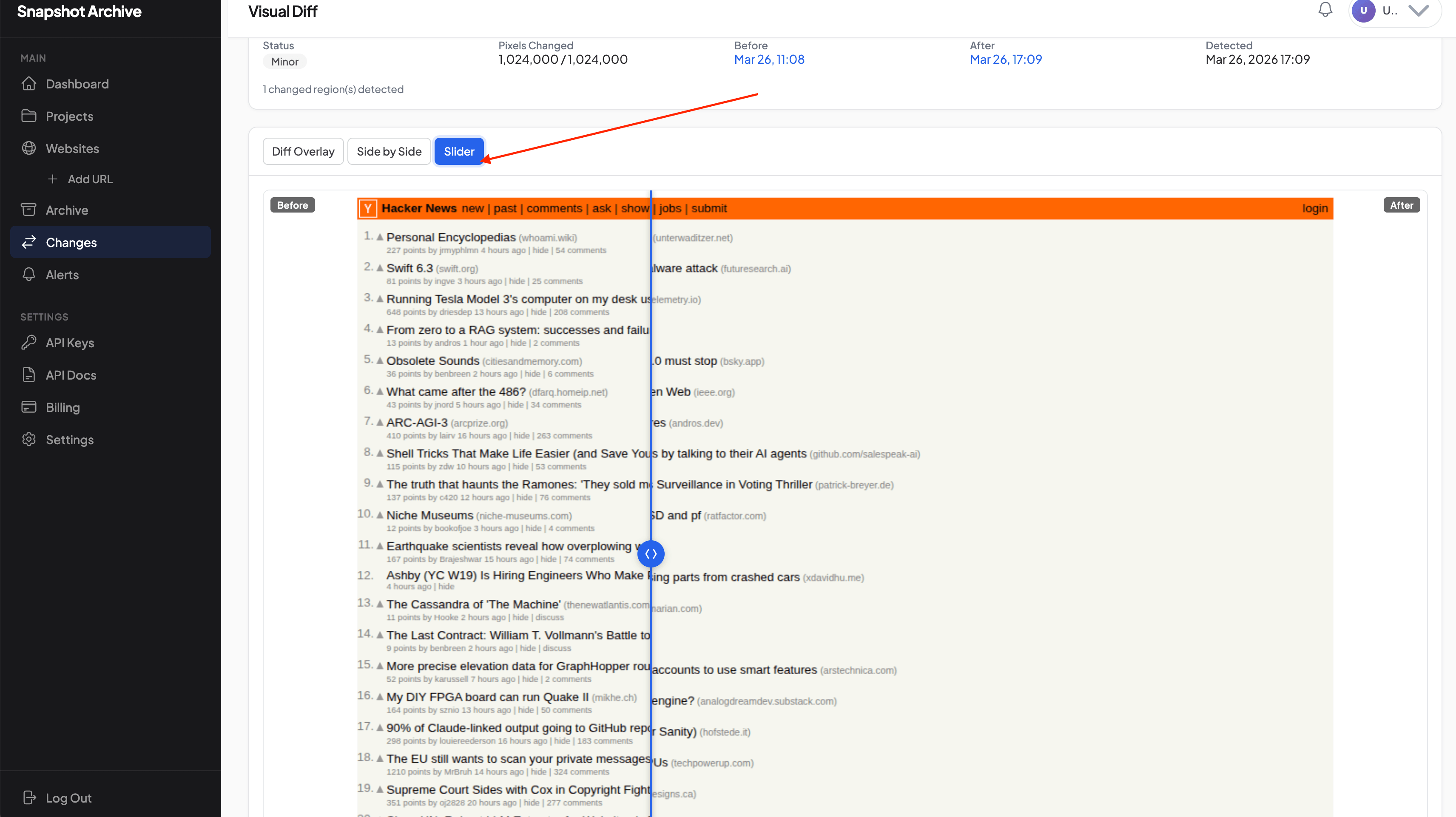

Step 7: visual comparison — three ways to see what changed

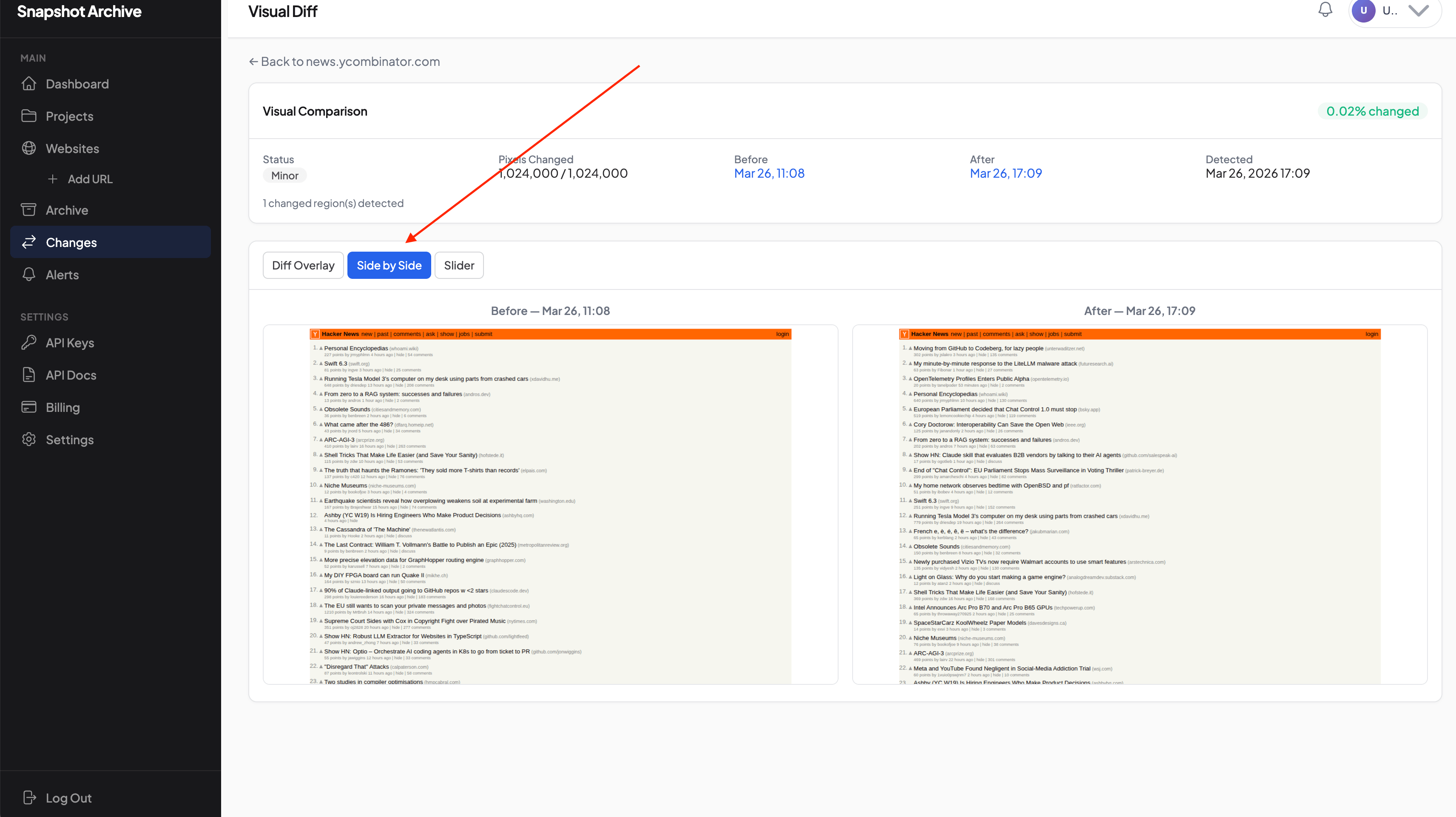

This is the step that makes the whole thing worth it. When the system detects changes, it creates a visual comparison you can view in three different modes.

Side by Side places two screenshots next to each other, labeled "Before" and "After," with the change percentage and pixel count at the top. In this example, you can see how the Hacker News front page headlines shifted between two captures six hours apart.

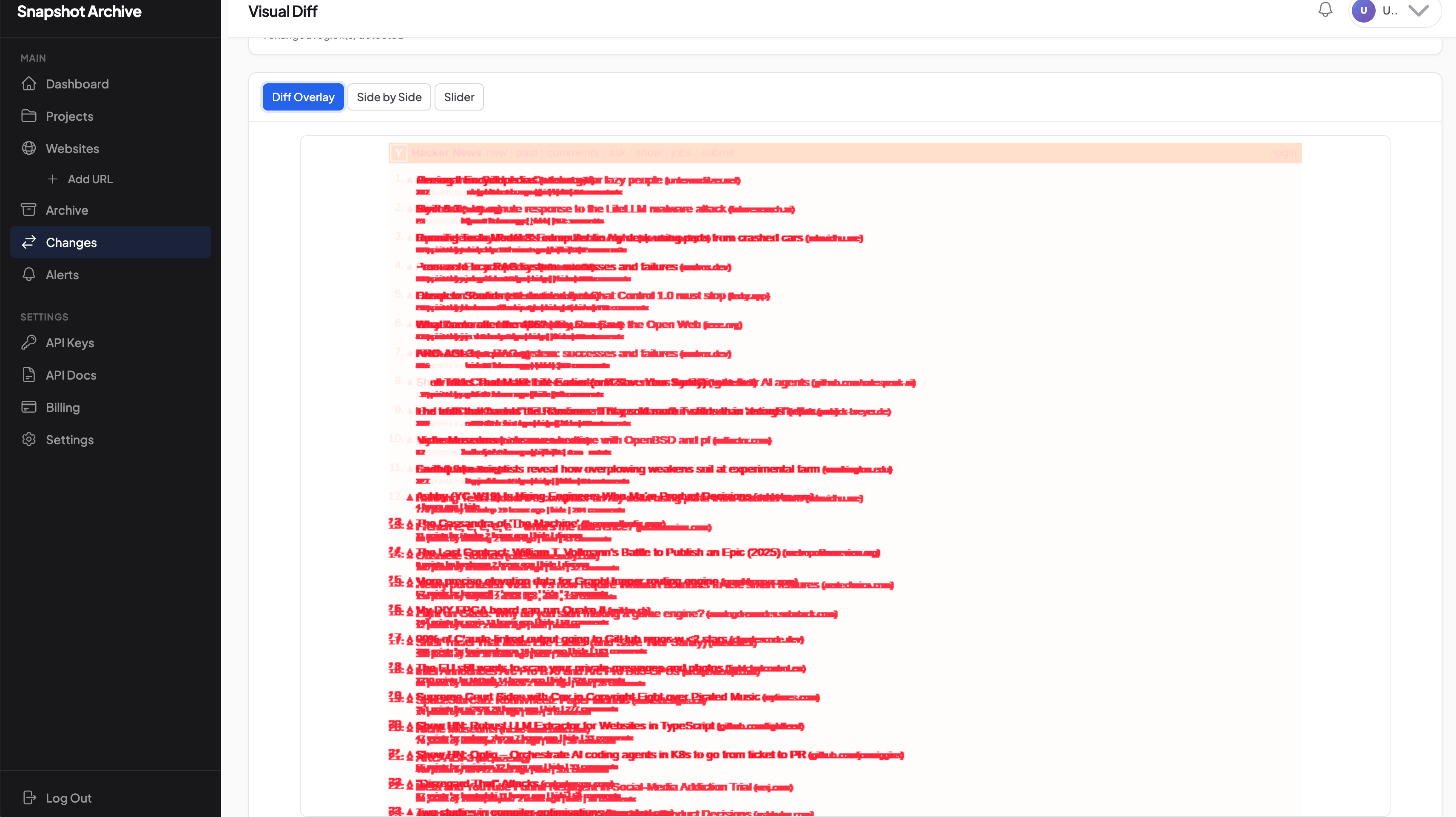

Diff Overlay highlights all changed areas in red directly on the screenshot. This mode is the fastest way to understand which parts of the page moved — no squinting, no guessing, just red marks on the exact zones where something is different.
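The idea behind an overlay is easy to sketch: walk both captures pixel by pixel and paint every pixel that differs. This toy version works on nested lists of RGB tuples rather than real image files, and `diff_overlay` is our own illustrative function, not how Snapshot Archive renders its overlay. A production tool may also blend or dim the unchanged areas to make the red stand out.

```python
Pixel = tuple[int, int, int]
Image = list[list[Pixel]]
RED: Pixel = (255, 0, 0)


def diff_overlay(before: Image, after: Image, highlight: Pixel = RED) -> Image:
    """Copy of `after` with every pixel that differs from `before` painted `highlight`."""
    if len(before) != len(after) or any(len(b) != len(a) for b, a in zip(before, after)):
        raise ValueError("captures must have the same dimensions")
    return [
        [highlight if px_b != px_a else px_a for px_b, px_a in zip(row_b, row_a)]
        for row_b, row_a in zip(before, after)
    ]


before = [[(255, 255, 255), (255, 255, 255)], [(0, 0, 0), (0, 0, 0)]]
after = [[(255, 255, 255), (200, 200, 200)], [(0, 0, 0), (0, 0, 0)]]
print(diff_overlay(before, after))
# [[(255, 255, 255), (255, 0, 0)], [(0, 0, 0), (0, 0, 0)]]
```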

Slider stacks the two screenshots on top of each other with a draggable handle between them. Drag it left and right to watch one version transition into the other — this is the most intuitive way to spot differences, especially on pages where the changes are subtle.

Compare that to the manual approach: opening two files, placing them side by side, trying to hold the differences in your head. These three comparison modes do that work for you, and they do it more reliably than your eyes ever could on a long page.

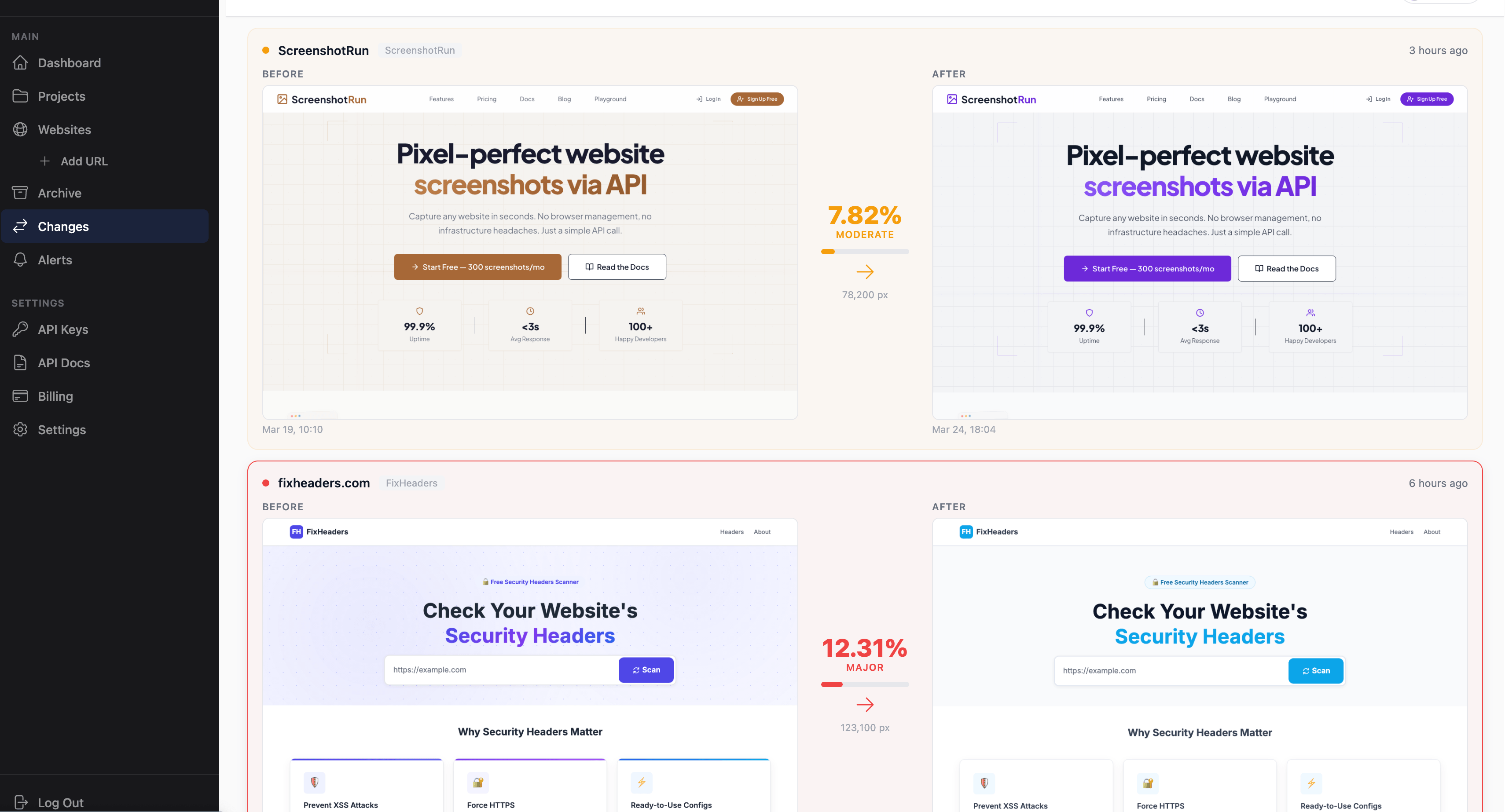

Step 8: the changes feed collects everything in one place

Every detected change is collected in the Changes section. For each monitored website, you see a pair of screenshots (Before/After), the change percentage, and a severity level — Minimal, Moderate, or Major. When you're tracking several sites at once, this feed lets you scan everything in under a minute and focus only on the changes that need attention.
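The severity labels are essentially buckets over the change percentage. Snapshot Archive doesn't tell us where its cutoffs sit, so the 1% and 10% boundaries below are made-up assumptions, as are the feed entries. The sketch only shows how such a mapping lets you filter a feed down to what needs attention.

```python
def severity(change_percent: float, moderate_at: float = 1.0, major_at: float = 10.0) -> str:
    """Bucket a change percentage into a severity label.

    The default cutoffs are illustrative assumptions, not the product's real values.
    """
    if change_percent < 0:
        raise ValueError("change_percent must be non-negative")
    if change_percent >= major_at:
        return "Major"
    if change_percent >= moderate_at:
        return "Moderate"
    return "Minimal"


# Hypothetical feed entries: (site, change percent)
feed = [("news.ycombinator.com", 0.4), ("competitor pricing", 12.0), ("vendor ToS", 2.5)]
needs_attention = [(site, pct, severity(pct)) for site, pct in feed if severity(pct) != "Minimal"]
print(needs_attention)
# [('competitor pricing', 12.0, 'Major'), ('vendor ToS', 2.5, 'Moderate')]
```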

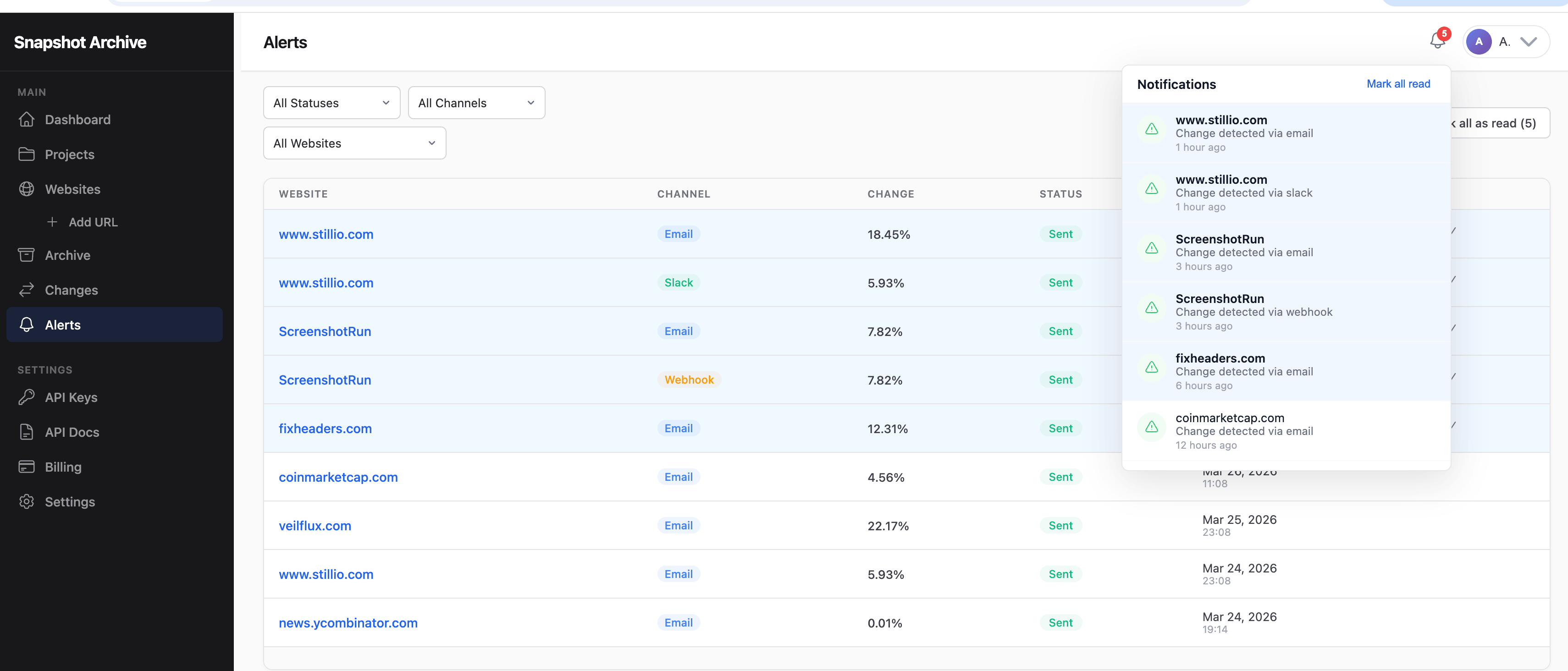

Step 9: alerts arrive without you checking anything

When changes exceed the threshold you've set, the system sends notifications automatically. All sent alerts are collected in the Alerts section, where you can see which website changed, which channel was used (Email, Slack, Webhook), the change percentage, and the delivery status. A notification icon in the top-right corner shows unread alerts — hover over it for a quick list of recent changes without leaving whatever page you're on.

What this looks like after a week of real use

The entire setup took us a couple of minutes. From that point on, the system takes screenshots every 6 hours — no weekends, no breaks, no "I forgot." Every new capture gets compared to the previous one pixel by pixel, and if something changed beyond the threshold, a notification arrives with the percentage and a direct link to the visual comparison.
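The schedule itself is simple arithmetic. Here's a sketch of what "every 6 hours" adds up to over a week, with each capture paired with the one before it for comparison. `capture_times` is a hypothetical helper; a real scheduler would presumably also need to handle retries and missed slots.

```python
from datetime import datetime, timedelta, timezone


def capture_times(start: datetime, every: timedelta, count: int) -> list[datetime]:
    """The first `count` scheduled capture times, starting at `start`."""
    return [start + i * every for i in range(count)]


every = timedelta(hours=6)
per_day = timedelta(days=1) // every          # 4 captures a day
week = capture_times(datetime(2026, 3, 25, tzinfo=timezone.utc), every, 7 * per_day)
comparisons = list(zip(week, week[1:]))        # each capture vs. the one before it
print(per_day, len(week), len(comparisons))    # 4 28 27
```

That's 28 captures and 27 before/after comparisons per URL per week, none of which depend on anyone remembering to do them.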

Compare that to the manual way: visiting the site yourself, taking a screenshot, saving it to the right folder with the right filename, then opening the previous capture and comparing by eye. Four times a day, every day, for every URL. In practice, nobody sustains that for more than a week — and the moment you skip a day, you've introduced a gap in your archive that you can never fill retroactively.

The gap between the two approaches becomes even more obvious when you're tracking more than one website. Each additional URL in Snapshot Archive, whether it's the second, third, or tenth, takes about a minute to add. Building and maintaining a folder structure for ten websites by hand is real work: naming conventions, date formats, desktop vs mobile variants, remembering which subfolder goes where. It's the kind of overhead that makes people abandon the process entirely.

There's one more thing that manual monitoring simply cannot do: sensitivity thresholds. Your eyes can't tell the difference between a page where 0.3% of pixels changed (a timestamp updated in the footer) and a page where nothing changed at all. Automated comparison catches those micro-changes and can either ignore them — if your threshold is set to filter out noise — or flag them immediately if you're monitoring a page where every pixel matters, like a Terms of Service page where even a single word change can have legal implications.

When a manual screenshot is still the right call

We're not saying manual screenshots are useless — there are situations where they work just fine. A one-off capture for a report or a presentation. A quick competitor check before a meeting to see if anything looks different. A screenshot for your internal wiki or a slide deck. If the task is to capture one page one time, there's no reason to set up automation for it.

But if the monitoring is ongoing — if it matters that you don't miss changes, if you need to compare states across days or weeks, if you're tracking more than a couple of URLs — automation saves hours of manual work every week and catches things your eyes won't. The difference isn't marginal; it's the difference between a process that runs reliably on autopilot and one that depends entirely on someone remembering to open a browser and press a button at the right time.

Snapshot Archive's free plan covers 3 websites with daily screenshots and a 7-day archive. Add a competitor's pricing page, your own homepage, and a vendor's ToS — wait a few days, and see whether the automated comparison tells you something you would have missed. The whole process from sign-up to the first screenshot takes less than five minutes, and we just walked through every step.

Start archiving websites today

Free plan includes 3 websites with daily captures. No credit card required.


Vitalii Holben